Sensors

Sensors collect information about the surroundings. By default, every environment provides three basic sensors:

Lidar

SideDetector

LaneLineDetector

which are used for detecting moving objects, sidewalks/solid lines, and broken/solid lines respectively.

Because these sensors are built on ray tests and do not require graphics support, they can be used in all modes.

You also don't need to create them more than once: they are not bound to any object until perceive() is called with a target object specified. After collecting results, these ray-based sensors are detached and ready for the next use.
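The ray-test idea, and why one sensor instance can be shared, can be sketched in plain Python. This is not MetaDrive's implementation (which relies on the physics engine's ray tests); the RaySensor class, the circular obstacles, and normalizing each reading by the maximum distance are assumptions for illustration. The key point is that the target's pose is passed to perceive() and nothing about the target is stored afterwards, so the same instance can serve any object.

```python
import math
from dataclasses import dataclass


@dataclass
class Circle:
    """Hypothetical circular obstacle in a 2D world."""
    x: float
    y: float
    r: float


def ray_circle_hit(ox, oy, dx, dy, c):
    """Distance along a unit ray (ox, oy) + t * (dx, dy) to circle c, or None."""
    fx, fy = ox - c.x, oy - c.y
    b = fx * dx + fy * dy
    disc = b * b - (fx * fx + fy * fy - c.r * c.r)
    if disc < 0:
        return None  # the ray's line misses the circle
    sq = math.sqrt(disc)
    for t in (-b - sq, -b + sq):  # nearest intersection first
        if t >= 0:
            return t
    return None  # circle lies behind the origin


class RaySensor:
    """Hypothetical ray-based sensor: holds only its parameters, never a target."""

    def __init__(self, num_lasers, distance):
        self.num_lasers = num_lasers
        self.distance = distance

    def perceive(self, origin, heading, obstacles):
        ox, oy = origin
        readings = []
        for i in range(self.num_lasers):
            angle = heading + 2 * math.pi * i / self.num_lasers
            dx, dy = math.cos(angle), math.sin(angle)
            hit = self.distance  # no hit -> full range
            for c in obstacles:
                t = ray_circle_hit(ox, oy, dx, dy, c)
                if t is not None and t < hit:
                    hit = t
            readings.append(hit / self.distance)  # normalized to [0, 1]
        return readings


# One instance, two different "targets": no re-creation needed.
sensor = RaySensor(num_lasers=4, distance=50.0)
world = [Circle(10.0, 0.0, 1.0)]
first = sensor.perceive((0.0, 0.0), 0.0, world)
second = sensor.perceive((20.0, 0.0), 0.0, world)
print(first)   # [0.18, 1.0, 1.0, 1.0]
print(second)  # [1.0, 1.0, 0.18, 1.0]
```

A reading of 1.0 means the ray reached its maximum range without hitting anything.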

You can access them anywhere through engine.get_sensor(sensor_id):

from metadrive.envs import MetaDriveEnv

env = MetaDriveEnv(dict(log_level=50))

env.reset()

lidar = env.engine.get_sensor("lidar")

side_lidar = env.engine.get_sensor("side_detector")

lane_line_lidar = env.engine.get_sensor("lane_line_detector")

print("Available sensors are:", env.engine.sensors.keys())

env.close()


Add New Sensor

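To add a new sensor, request it through the sensors field of the environment config.

If a sensor is defined as follows:

from metadrive.component.sensors.base_sensor import BaseSensor

class MySensor(BaseSensor):

    def __init__(self, args_1, args_2, engine):

        ...  # args_1 and args_2 come from the config; engine is passed in as the last argument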

Then it can be created with:

from metadrive.envs import MetaDriveEnv

env_cfg = dict(sensors=dict(new_sensor=(MySensor, args_1, args_2)))

env = MetaDriveEnv(env_cfg)
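The config convention above, where each entry maps a sensor id to (class, *args) and the constructor receives the engine after those arguments, can be illustrated with a stand-in engine. MiniEngine and the simplified BaseSensor below are hypothetical and only mimic the registration and lookup pattern; MetaDrive's real engine also sets up the default sensors and handles rendering.

```python
class BaseSensor:
    """Hypothetical minimal base class (not MetaDrive's BaseSensor)."""

    def __init__(self, engine):
        self.engine = engine

    def perceive(self, *args, **kwargs):
        raise NotImplementedError


class MySensor(BaseSensor):
    def __init__(self, args_1, args_2, engine):
        super().__init__(engine)
        self.args_1 = args_1
        self.args_2 = args_2

    def perceive(self, target):
        return (self.args_1, self.args_2, target)


class MiniEngine:
    """Hypothetical engine: builds sensors from config and looks them up by id."""

    def __init__(self, config):
        self.sensors = {}
        for sensor_id, spec in config.get("sensors", {}).items():
            cls, *args = spec  # (class, arg_1, arg_2, ...)
            self.sensors[sensor_id] = cls(*args, self)  # engine goes last

    def get_sensor(self, sensor_id):
        if sensor_id not in self.sensors:
            raise KeyError(f"unknown sensor {sensor_id!r}; available: {list(self.sensors)}")
        return self.sensors[sensor_id]


engine = MiniEngine(dict(sensors=dict(new_sensor=(MySensor, 1, "two"))))
sensor = engine.get_sensor("new_sensor")
print(sensor.perceive("ego"))  # (1, 'two', 'ego')
```

Passing the engine last keeps the config tuple limited to sensor-specific arguments, while every sensor still gets access to the engine that owns it.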

The following example shows how to create an RGBCamera with a buffer size of width=256 and height=128 (reduced to 16x16 when the TEST_DOC environment variable is set, for GitHub CI).

Note: to create cameras or any other sensors that require rendering, set image_observation=True.

from metadrive.envs.base_env import BaseEnv

from metadrive.component.sensors.rgb_camera import RGBCamera

import cv2

import os

size = (256, 128) if not os.getenv('TEST_DOC') else (16, 16) # for github CI

env_cfg = dict(log_level=50, # suppress log

image_observation=True,

show_terrain=not os.getenv('TEST_DOC'),

sensors=dict(rgb=[RGBCamera, *size]))

env = BaseEnv(env_cfg)

env.reset()

print("Available sensors are:", env.engine.sensors.keys())

cam = env.engine.get_sensor("rgb")

img = cam.get_rgb_array_cpu()

cv2.imwrite("img.png", img)

env.close()

Available sensors are: dict_keys(['lidar', 'side_detector', 'lane_line_detector', 'rgb'])

from IPython.display import Image

Image(open("img.png", "rb").read())

Besides rgb, a main_camera may also be provided automatically. It is an RGB camera that renders into the pop-up window, and it can serve as a sensor as well.

Graphics-based Sensors

We provide the following sensors:

Main Camera

RGB Camera

Depth Camera

Semantic Camera

Using semantic camera as observation

from metadrive.envs import MetaDriveEnv

from metadrive.component.sensors.semantic_camera import SemanticCamera

import matplotlib.pyplot as plt

import os

size = (256, 128) if not os.getenv('TEST_DOC') else (16, 16) # for github CI

env = MetaDriveEnv(dict(

log_level=50, # suppress log

image_observation=True,

show_terrain=not os.getenv('TEST_DOC'),

sensors={"sementic_camera": [SemanticCamera, *size]},

vehicle_config={"image_source": "sementic_camera"},

stack_size=3,

))

obs, info = env.reset()

for _ in range(5):

    obs, r, d, t, i = env.step((0, 1))

env.close()

print({k: v.shape for k, v in obs.items()}) # Image is in shape (H, W, C, num_stacks)

{'image': (128, 256, 3, 3), 'state': (19,)}
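The (H, W, C, num_stacks) layout printed above can be reproduced with a small frame buffer in plain Python. This is an illustrative sketch, not MetaDrive's observation code: the FrameStack and blank_frame helpers are hypothetical, and padding the buffer with the first frame at reset and keeping the newest frame at the last index are assumptions. It also shows why a camera created with size (256, 128), i.e. width 256 and height 128, yields arrays whose first axis is 128: image rows correspond to the height.

```python
from collections import deque


def blank_frame(width, height, channels=3, value=0.0):
    """Hypothetical H x W x C frame as nested lists (rows first, like an image array)."""
    return [[[value] * channels for _ in range(width)] for _ in range(height)]


class FrameStack:
    """Keeps the last `stack_size` frames and stacks them on a new trailing axis."""

    def __init__(self, stack_size):
        self.frames = deque(maxlen=stack_size)

    def reset(self, first_frame):
        # Assumption: fill the buffer with copies of the first frame.
        self.frames.clear()
        for _ in range(self.frames.maxlen):
            self.frames.append(first_frame)

    def push(self, frame):
        self.frames.append(frame)  # the oldest frame is dropped automatically

    def observation(self):
        # obs[h][w][c][k] == self.frames[k][h][w][c]; k = -1 is the newest frame
        ref = self.frames[0]
        return [[[[f[h][w][c] for f in self.frames]
                  for c in range(len(ref[0][0]))]
                 for w in range(len(ref[0]))]
                for h in range(len(ref))]


def shape(nested):
    """Shape of a regular nested list, e.g. (H, W, C, K)."""
    dims = []
    while isinstance(nested, list):
        dims.append(len(nested))
        nested = nested[0]
    return tuple(dims)


stack = FrameStack(stack_size=3)
stack.reset(blank_frame(width=256, height=128))
for step in range(5):
    stack.push(blank_frame(width=256, height=128, value=float(step)))
obs = stack.observation()
print(shape(obs))      # (128, 256, 3, 3)
print(obs[0][0][0])    # [2.0, 3.0, 4.0]: only the last three frames remain
```

Indexing the last axis, as in obs["image"][:, :, :, k] in the plotting code, selects one stored frame.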

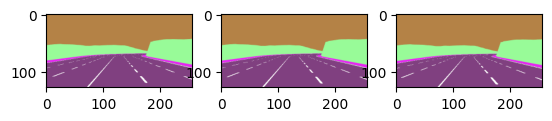

plt.subplot(131)

plt.imshow(obs["image"][:, :, :, 0])

plt.subplot(132)

plt.imshow(obs["image"][:, :, :, 1])

plt.subplot(133)

plt.imshow(obs["image"][:, :, :, 2])


Retrieve semantic images

from metadrive.envs import MetaDriveEnv

from metadrive.component.sensors.semantic_camera import SemanticCamera

import cv2

import os

size = (256, 128) if not os.getenv('TEST_DOC') else (16, 16) # for github CI

env = MetaDriveEnv(dict(

log_level=50, # suppress log

image_observation=True,

show_terrain=not os.getenv('TEST_DOC'),

sensors={"sementic_camera": [SemanticCamera, *size]},

vehicle_config={"image_source": "sementic_camera"}

))

env.reset()

print("Available sensors are:", env.engine.sensors.keys())

cam = env.engine.get_sensor("sementic_camera")

img = cam.get_image(env.agent)

cv2.imwrite("semantics.png", img)

env.close()

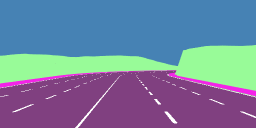

from IPython.display import Image

Image(open("semantics.png", "rb").read())

Available sensors are: dict_keys(['lidar', 'side_detector', 'lane_line_detector', 'sementic_camera'])

Demo on RGB camera

from metadrive.envs.base_env import BaseEnv

from metadrive.component.sensors.rgb_camera import RGBCamera

import cv2

import os

size = (256, 128) if not os.getenv('TEST_DOC') else (16, 16) # for github CI

env_cfg = dict(log_level=50, # suppress log

image_observation=True,

show_terrain=not os.getenv('TEST_DOC'),

sensors=dict(rgb_camera=[RGBCamera, *size]))

env = BaseEnv(env_cfg)

env.reset()

print("Available sensors are:", env.engine.sensors.keys())

cam = env.engine.get_sensor("rgb_camera")

img = cam.get_rgb_array_cpu()

cv2.imwrite("rgb.png", img)

env.close()

from IPython.display import Image

Image(open("rgb.png", "rb").read())